3D Majolica

These twelve models are virtual recreations of objects featured in the Majolica Mania exhibition. To view a model, click the play button in the center of the image below. To view the model in full-screen mode, select the multidirectional arrow button in the bottom-right corner of the viewer. Click or tap and hold to rotate the model, and use the mouse wheel or pinch gestures to zoom in and out.

Find out more about how these models were created.

These models were developed for educational use with the aim of sharing exhibition objects with a global audience. Although every effort was made to generate accurate representations of these objects, their physical complexities coupled with the use of evolving imaging processes resulted in certain small inconsistencies, often in the recesses and on the bottom of the objects. These areas are marked with a solid grey fill.

Minton & Co., Stoke-upon-Trent, Staffordshire

Designed ca. 1867; this example 1872

Earthenware with majolica glazes

11 3/8 x 20 x 8 in. (29 x 51 x 20.5 cm)

Collection of Marilyn and Edward Flower

Griffen, Smith & Co., Phoenixville, Pennsylvania

ca. 1879–90

Earthenware with majolica glazes

H. 6 x diam. 11 3/4 in. (15.2 x 29.8 cm)

Private collection, ex coll. Dr. Howard Silby

Minton & Co., Stoke-upon-Trent, Staffordshire

1868

Earthenware with majolica glazes and metal mounts

13 x 7 3/4 x 6 in. (33 x 19.5 x 15.3 cm)

Joan Stacke Graham Collection

George Jones & Sons, Stoke-upon-Trent, Staffordshire

Design registered 1875

Earthenware with majolica glazes

7 5/8 x 14 3/4 x 7 7/8 in. (19.3 x 37.5 x 20 cm)

Joan Stacke Graham Collection

Johann Hasselmann (John) Hénk (1847–1921), designer

Minton & Co., Stoke-upon-Trent, Staffordshire, manufacturer

ca. 1875

Earthenware with majolica glazes

6 x 13 5/8 x 2 3/4 in. (15.3 x 34.5 x 7 cm)

Joan Stacke Graham Collection

W. T. Copeland & Sons, Stoke-upon-Trent, Staffordshire

ca. 1876

Earthenware with majolica glazes

H. 9 3/4 x diam. 8 5/8 in. (25 x 22 cm)

Joan Stacke Graham Collection

Pierre-Émile Jeannest (1813–1857), designer

Minton & Co., Stoke-upon-Trent, Staffordshire, manufacturer

Designed ca. 1851; this example ca. 1855–60

Earthenware with majolica glazes

H. 24 5/8 x diam. 16 3/8 in. (62.5 x 41.6 cm)

Collection of Marilyn and Edward Flower, ex coll. Dr. Marilyn Karmason

Worcester Royal Porcelain Company, Worcester, Worcestershire

Designed ca. 1868

Earthenware with majolica glazes

9 1/4 x 6 5/8 x 6 in. (23.4 x 16.8 x 15 cm)

Collection of Marilyn and Edward Flower

T. C. Brown-Westhead, Moore & Co., Hanley, Staffordshire

ca. 1876

Earthenware with majolica glazes

24 7/8 x 14 1/2 x 13 3/8 in. (63.2 x 36.8 x 34 cm)

Collection of Marilyn and Edward Flower

Case: D. F. Haynes & Co., Chesapeake Pottery, Baltimore, Maryland; mechanism: New Haven Clock Company, New Haven, Connecticut

ca. 1883–86

Earthenware with majolica glazes, brass, wood, and other materials

11 7/8 x 10 3/4 x 4 3/8 in. (30.1 x 27.3 x 11.1 cm)

Private collection

Minton & Co., Stoke-upon-Trent, Staffordshire

Designed ca. 1851; this example 1866

Earthenware with majolica glazes

13 1/2 x 19 7/8 x 18 1/2 in. (34.3 x 50.3 x 47 cm)

Joan Stacke Graham Collection

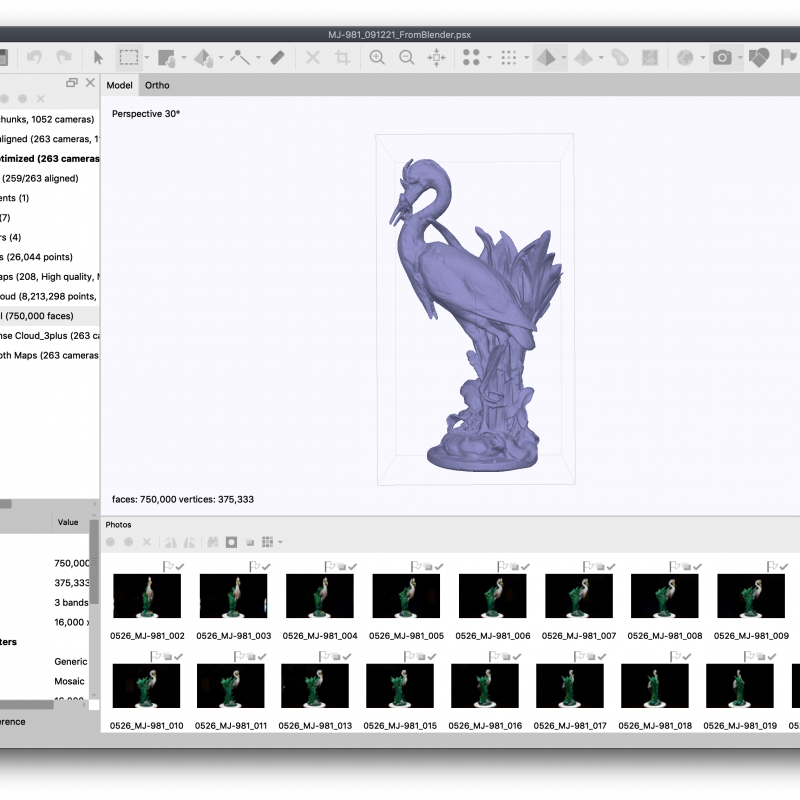

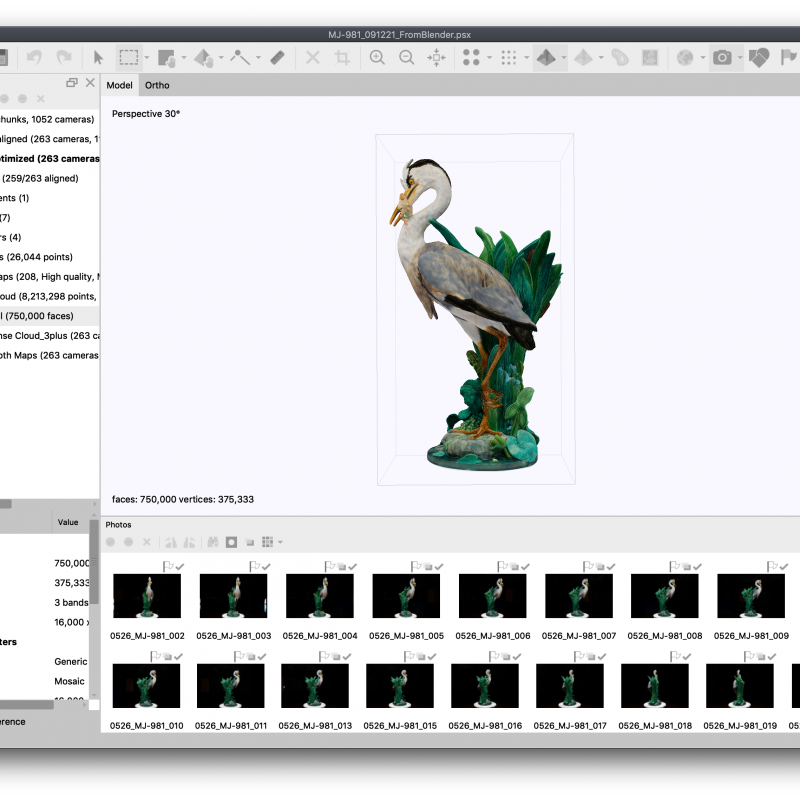

Paul Comoléra (1818–1897), designer

Minton & Co., Stoke-upon-Trent, Staffordshire, manufacturer

Designed ca. 1873–74; this example 1876

Earthenware with majolica glazes

39 1/2 x 21 3/4 x 15 3/4 in. (100.3 x 55.3 x 40 cm)

Joan Stacke Graham Collection

Making the 3D Objects

These 3D models were created by Sasha Arden, the Rachel and Jonathan Wilf & Andrew W. Mellon Fellow in Time-Based Media Conservation at The Conservation Center, Institute of Fine Arts, New York University, using a process called photogrammetry.

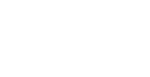

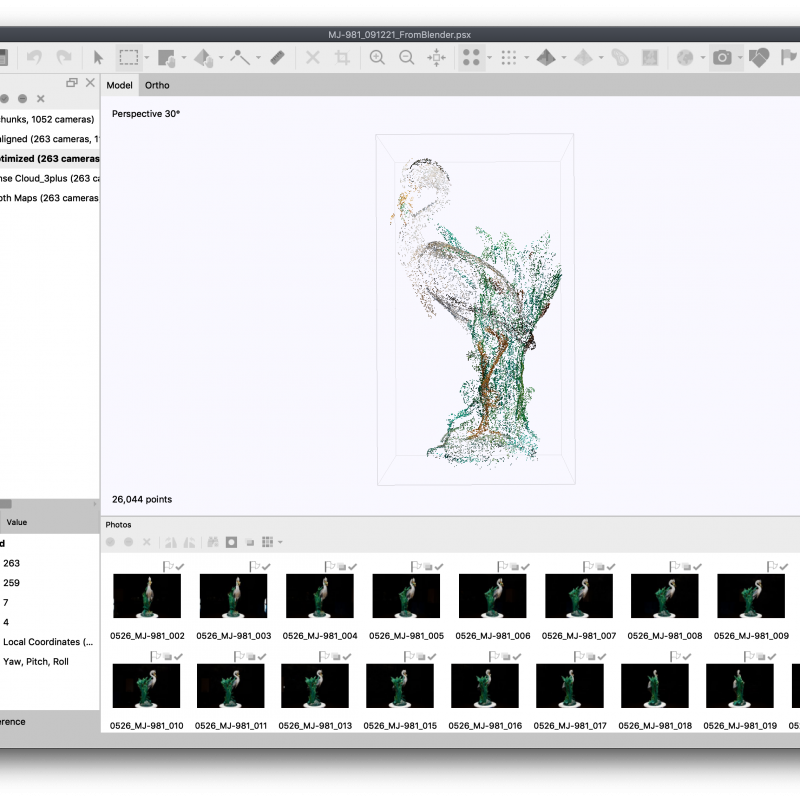

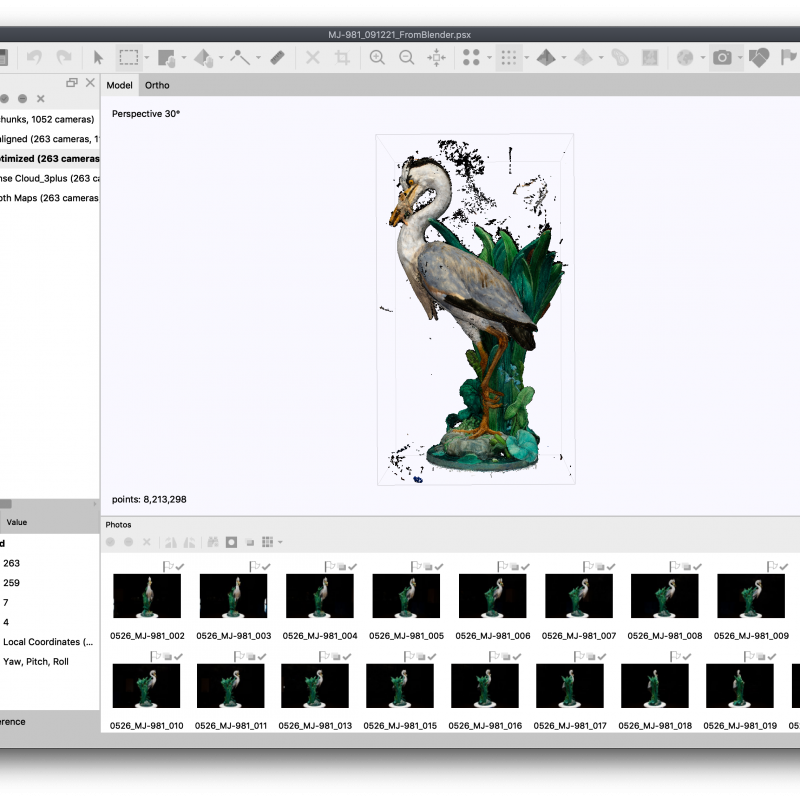

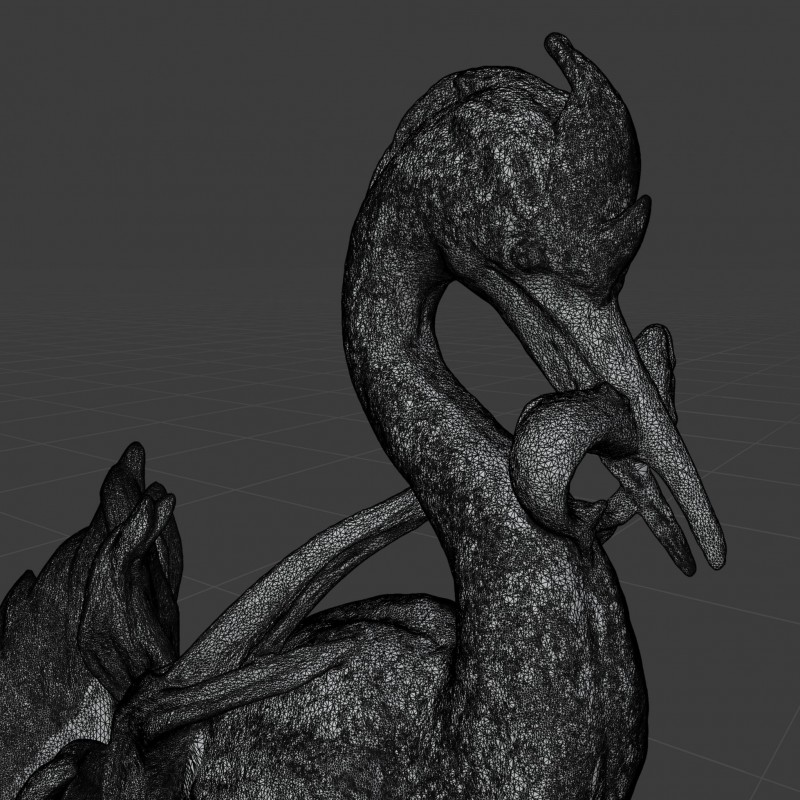

This process begins by taking a series of digital photographs of each object with a high-quality camera and a fixed lens. The camera must capture at least three views of each point on the object for that point to be included in the 3D model, and the resulting image set for each object can contain hundreds of photographs.

Specialized software called Metashape then interprets this image set to generate the first iteration of the 3D model. First, the program analyzes and compares the pixels in every image in the set to identify which points belong to the object and which are part of the background. This process yields a “sparse cloud” of the points most likely to make up the final model, which is then refined over several additional steps to create a “dense cloud.” Based on those points (or on depth maps derived from an analysis of the image set), a fine mesh of geometric shapes is then created to generate a “solid” form.

The mesh often needs to be manually edited in Blender, an open-source 3D-modeling program, to correct the areas where Metashape was unable to interpret the image set accurately. Once those corrections are made, the edited mesh is imported back into Metashape, where the model’s “texture” is created using the original image set. This final texture is a composite image, drawn from the colors of the original object, that is mapped onto the mesh. The final 3D model is then uploaded to a viewing platform where environmental variables such as background color and lighting can be adjusted to create a faithful representation of the original object.
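The three-view requirement mentioned above can be illustrated with a short Python sketch. It is only a toy model of the idea and does not reflect how Metashape works internally: the function name, the photo and point labels, and the input format (pairs of photo and point identifiers) are all hypothetical and chosen for illustration.

```python
from collections import defaultdict

# Threshold taken from the text: a point needs at least three views.
MIN_VIEWS = 3


def points_with_enough_views(observations, min_views=MIN_VIEWS):
    """Return the point ids that appear in at least `min_views` distinct photos.

    `observations` is an iterable of (photo_id, point_id) pairs. This input
    format is an assumption made for the example, not a real file format.
    """
    photos_per_point = defaultdict(set)
    for photo_id, point_id in observations:
        # A set ignores repeat sightings of a point within the same photo.
        photos_per_point[point_id].add(photo_id)
    return {
        point_id
        for point_id, photos in photos_per_point.items()
        if len(photos) >= min_views
    }


# Hypothetical observations for three points on an object.
observations = [
    ("IMG_001", "rim"), ("IMG_002", "rim"), ("IMG_003", "rim"),
    ("IMG_001", "handle"), ("IMG_002", "handle"),
    ("IMG_003", "base"), ("IMG_003", "base"), ("IMG_003", "base"),
]

kept = points_with_enough_views(observations)
print(sorted(kept))
```

In this example only the rim qualifies: the handle appears in two photographs, and the base appears three times but always in the same photograph, so neither meets the threshold. This mirrors why recesses and undersides, which are hard to photograph from several angles, are the areas most likely to be missing from a model.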